Designing the AI-Ready Classroom: A Framework for What Comes Next

The tools are already in your building. The question was never whether AI belongs in education — it’s whether you’re the one doing the designing. Here’s how to build a classroom AI system that serves students instead of substituting for the humans who teach them.

The Retrofitting Problem Nobody Wants to Talk About

Most schools didn’t build an AI-ready classroom. They built a classroom, then began adding AI to it — one platform at a time, one budget cycle at a time, with varying degrees of intention and almost no overarching design. A reading platform here. An automated grading tool there. A chatbot for student support that got plugged in before winter break because a vendor offered a free trial. Each tool justified individually. None of them considered as a coherent system.

It’s the digital equivalent of wiring a century-old house for electricity without updating the infrastructure. The lights work — until they don’t — and by the time you notice something is wrong, you’re not sure where to start looking. The problem isn’t any single tool. The problem is that the house wasn’t designed for this kind of load.

The prevailing conversation in K–12 education right now frames AI readiness as a technology acquisition problem. District leaders debate procurement timelines. IT departments argue over data interoperability. Teachers wonder when the professional development that was promised in September will finally materialize. And in homeschool households, parents are navigating a marketplace of AI-powered curriculum tools without a guide, a framework, or a map.

Everyone is waiting for someone else to make the first real design decision. And in that vacuum, the vendors are deciding for them.

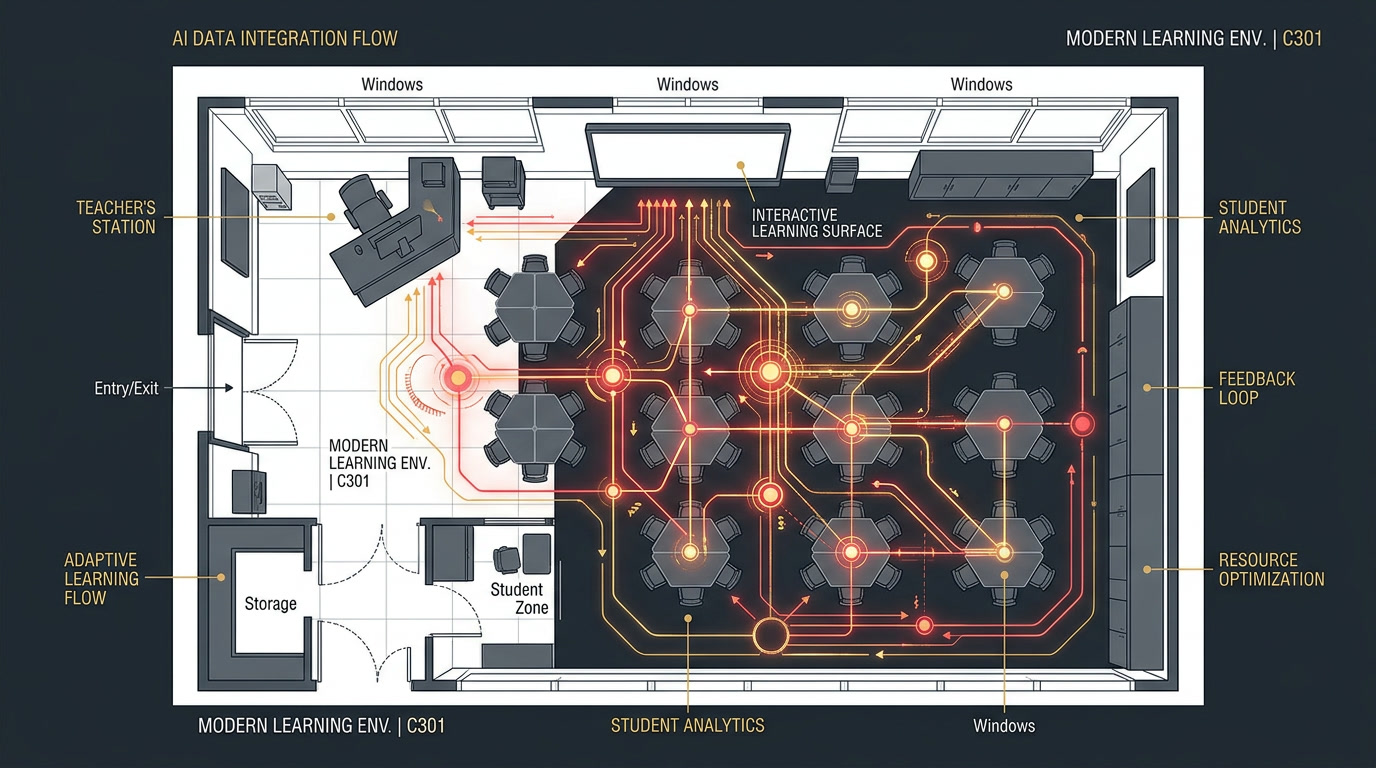

This is the fourth and final installment of The AI Classroom Stack series. In Part I, we mapped the five-layer infrastructure — LMS platforms, adaptive learning tools, automated grading, learning analytics, and teacher and admin AI — and showed how these tools were quietly assembling into something more interconnected than any of us voted for. In Part II, we traced what happens when that system starts making decisions on its own: the recommendation engines, the default effect, the accountability gap. In Part III, we went deeper into the question of data and power — who owns the algorithm, who benefits from it, and who bears the cost when it’s wrong.

Now, in Part IV, we’re building something. Not in the abstract sense of “AI should be used ethically” — we’ve all read that white paper. We’re building a practical framework for how schools, classrooms, and homeschool households can stop retrofitting and start designing. There’s a meaningful difference between those two words, and the gap between them is where most educational AI policy currently lives.

Three numbers tell the story. Nearly two-thirds of teachers are using AI tools right now, a near-doubling in adoption in a single year. Yet only a third of them have any policy guidance at all from their school or district on how to do so. And the market funding those tools has no obligation to wait for governance to catch up: it is on pace to grow from $5.88 billion in 2024 to $32 billion by 2030. The design decisions are being made. The question is who’s making them (EdWeek Research Center, 2025; RAND, 2025; Grand View Research, 2024).

What’s Actually Happening: The Shift from Tools to Systems

The most significant change in educational AI over the past 18 months isn’t any single tool — it’s the emergence of integrated AI ecosystems. Individual platforms that were once standalone are now communicating. Data that once lived in siloed applications is being shared across vendor networks. And the cumulative intelligence of those systems is beginning to shape instructional decisions in ways that were previously the exclusive domain of human educators.

To understand what that means practically, it helps to understand a few key terms. Interoperability refers to the ability of different software systems to exchange and make use of each other’s data. In the early days of edtech, most platforms were walled gardens — your LMS didn’t talk to your tutoring software; your grading platform didn’t feed your analytics dashboard. That’s changing rapidly. Standards like IMS Global’s Learning Tools Interoperability (LTI) and the Ed-Fi Alliance’s data framework are enabling platforms to share student data in ways that create more powerful — and more opaque — AI systems (IMS Global Learning Consortium, 2024).

Adaptive learning is the mechanism by which these systems personalize instruction. Rather than presenting a fixed sequence of content, adaptive systems use real-time performance data to adjust the difficulty, format, and pacing of learning activities. Carnegie Learning’s MATHia platform, for example, uses a cognitive model that has been calibrated against millions of student interactions to predict misconceptions before a student even makes a mistake (Carnegie Learning, 2025). That’s not a flashy demo feature — that’s a fundamental shift in how the feedback loop between learning and instruction works.
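To make that feedback loop concrete, here is a minimal Python sketch of one very simple adaptive rule: raise or lower difficulty based on a rolling window of recent answers. It is an illustration only, not how MATHia or any other named platform works. The function name `next_difficulty`, the five-answer window, and the thresholds are all assumptions chosen for the example.

```python
from collections import deque


def next_difficulty(current, recent_results, min_level=1, max_level=5,
                    raise_at=0.8, lower_at=0.5):
    """Return the next difficulty level from a window of recent results.

    recent_results is an iterable of booleans (True = correct answer).
    The thresholds are hypothetical and would need tuning on real data.
    """
    results = list(recent_results)
    if not results:
        return current  # no evidence yet, so keep the level unchanged
    accuracy = sum(results) / len(results)
    if accuracy >= raise_at:
        return min(current + 1, max_level)
    if accuracy < lower_at:
        return max(current - 1, min_level)
    return current


# Keep only the five most recent answers for one student.
window = deque(maxlen=5)
for correct in [True, True, True, True, False]:
    window.append(correct)

level = next_difficulty(3, window)
print(level)
```

Even a rule this small carries design decisions (how much evidence counts, when to step down) that a teacher might reasonably want to see and question. Production systems are far more complex, which is exactly why their logic is harder to audit.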

What’s new in 2025 and 2026 is the degree to which large language models are being embedded into these adaptive systems. The tutoring layer of the stack is no longer just branching logic and pre-written feedback — it’s generative AI capable of explaining concepts in a student’s own vocabulary, detecting affective signals in written responses, and adjusting the instructional register in real time. Platforms like Khanmigo (Khan Academy), Tutor AI, and Carnegie Learning’s newest integrations are early versions of what will become a standard layer of the AI classroom stack within the next five years (Mollick & Mollick, 2023).

“Debates about AI and education need to move on from concerns over getting AI to work like a human teacher. The question, instead, should be about distinctly non-human forms of AI-driven technologies that could be imagined, planned and created for educational purposes.”

Neil Selwyn, Should Robots Replace Teachers? AI and the Future of Education (Polity Press, 2019)

Researcher Yong Zhao at the University of Melbourne has spent years arguing that educational systems are structured around compliance and standardization in ways that may be fundamentally incompatible with personalized learning, and that AI, applied uncritically, risks amplifying those tendencies rather than dismantling them (Zhao, 2024). That’s a concern worth sitting with. The goal of designing an AI-ready classroom isn’t to replace the current system with a shinier version of the same constraints. It’s to build something better, using the design decisions we actually have control over.

Where Intentional AI Design Is Already Working

There are schools and districts that didn’t wait for a national framework. They designed their own. The patterns that emerge from their experiences — in the research literature, in practitioner case studies, and in the emerging body of AI governance documentation — form the scaffolding of what we can call intentional AI classroom design. It’s worth looking at what that actually looks like in practice.

The Mastery-First Model

Summit Public Schools, a network of charter schools in California and Washington, developed a personalized learning platform called Summit Learning in partnership with Facebook’s engineering team. What’s instructive about their model isn’t the technology — it’s the pedagogical philosophy that preceded it. Summit identified mastery of cognitive skills as the primary goal before selecting or building any tools. The AI layer was designed to serve that goal, not to define it (Wexler, 2019). Their teachers report using the platform’s analytics to inform their instructional decisions rather than to defer to them — a distinction that turns out to be structurally significant.

The Guardrail Framework in Practice

The International Society for Technology in Education (ISTE) published its AI in Education Framework in 2024, built around the principle that AI tools in classrooms should be evaluated along three axes: transparency (can teachers and students understand what the AI is doing and why?), equity (does the tool serve all learners, including those with disabilities, English language learners, and students from underresourced communities?), and agency (does the tool expand the decision-making capacity of teachers and students, or reduce it?). Schools that have adopted this framework as a procurement and implementation lens report higher rates of teacher satisfaction with AI tools and more consistent instructional outcomes (ISTE, 2024).
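One way a review team could put the three axes to work is a simple scoring record per tool. The Python sketch below is hypothetical: the 1-to-4 rating scale, the passing floor, the `ToolReview` class, and the example tool are all assumptions for illustration and are not part of the ISTE framework itself.

```python
from dataclasses import dataclass

AXES = ("transparency", "equity", "agency")


@dataclass
class ToolReview:
    name: str
    scores: dict  # axis name -> rating from 1 (poor) to 4 (strong)

    def weakest_axis(self):
        """Name the axis with the lowest rating."""
        return min(AXES, key=lambda axis: self.scores[axis])

    def passes(self, floor=3):
        """Pass only if every axis meets the floor.

        Checking each axis separately means a high average
        cannot hide one weak axis.
        """
        return all(self.scores[axis] >= floor for axis in AXES)


# A made-up tool rated by a hypothetical review committee.
review = ToolReview(
    "Hypothetical Essay Grader",
    {"transparency": 2, "equity": 3, "agency": 4},
)
print(review.passes(), review.weakest_axis())
```

The design choice worth noticing is the per-axis floor: a tool that is strong on agency but opaque about its reasoning still fails, and the record says where.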

The Homeschool Design Advantage

Homeschool families, interestingly, operate with a structural advantage in AI classroom design that institutional schools don’t have: they’re starting from scratch. A homeschool parent building a learning environment in 2026 isn’t retrofitting AI onto a 30-year-old curriculum map — they’re selecting tools with intention, sequencing them according to their child’s actual learning profile, and evaluating results against goals they defined themselves. The National Home Education Research Institute estimates that approximately 3.4 million U.S. students were homeschooled during the 2024–2025 school year — roughly double Catholic school enrollment and approaching public charter school levels (NHERI, 2026). Watching how the most intentional homeschool households design their AI stack is, in many ways, a preview of what institutional schools should be doing at scale.

Risks and Tradeoffs: When Implementation Precedes Philosophy

The classroom AI stack has a trust problem. Not trust in the sense of “do teachers trust the tools” — surveys suggest they increasingly do, perhaps more than is warranted. The trust problem is structural: when a system operates at a level of complexity that exceeds the ability of its human operators to audit or explain it, trust becomes a proxy for accountability. And proxies fail (O’Neil, 2016).

The most documented risk of unreflective AI adoption in education isn’t the science fiction scenario of robots replacing teachers. It’s a quieter and more mundane failure: the normalization of AI-generated instructional decisions as baseline reality. When a teacher consistently follows the adaptive platform’s suggested intervention without examining the underlying logic, they’re not using AI as a tool — they’re using it as a substitute for professional judgment. The tool becomes the authority. The authority becomes invisible. And when it fails, no one knows whose job it was to catch it (Krutka et al., 2021).

Bias in AI training data is a well-documented concern in the research literature, and educational AI is not exempt. Automated essay scoring tools have been shown to favor certain syntactic patterns associated with standardized academic writing, systematically disadvantaging students whose first language is not English and students who communicate in African American Vernacular English (AAVE) (Madnani et al., 2017). Adaptive math platforms calibrated on data from higher-income suburban districts may be poorly equipped to serve students whose learning histories don’t resemble that dataset. These aren’t edge cases — they’re structural features of how these systems were built, and they will persist unless districts actively audit for them.

Invisible Authority: When AI recommendations become defaults and teachers stop examining the underlying logic, accountability disappears. Design your stack so that every AI-generated decision surfaces with enough context for a human to evaluate it.

Data Enclosure: When student learning data is locked inside vendor ecosystems, schools lose the ability to audit outcomes, switch providers, or own the evidence of their students’ growth. Demand data portability before you sign a contract.

Equity Drift: AI systems calibrated on narrow or biased datasets will consistently underserve specific student populations — quietly, over time, without flagging themselves as defective. Build equity audits into your implementation timeline, not just your procurement checklist.
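An equity audit can start small. The Python sketch below compares automated scores with human scores for the same work and reports the average gap for each student group. The field names, group labels, sample records, and tolerance are all hypothetical. A real audit would also need adequate sample sizes, appropriate statistical testing, and privacy safeguards for any demographic data.

```python
from collections import defaultdict
from statistics import mean


def score_gaps_by_group(records):
    """Mean (automated - human) score difference for each student group."""
    diffs = defaultdict(list)
    for record in records:
        diffs[record["group"]].append(
            record["auto_score"] - record["human_score"]
        )
    return {group: mean(values) for group, values in diffs.items()}


def flag_groups(gaps, tolerance=0.5):
    """Groups whose average gap exceeds the tolerance in either direction."""
    return sorted(g for g, gap in gaps.items() if abs(gap) > tolerance)


# Made-up records: the same essays scored by a tool and by a teacher.
records = [
    {"group": "A", "auto_score": 4, "human_score": 4},
    {"group": "A", "auto_score": 3, "human_score": 3},
    {"group": "B", "auto_score": 2, "human_score": 4},
    {"group": "B", "auto_score": 3, "human_score": 4},
]

gaps = score_gaps_by_group(records)
print(gaps)
print(flag_groups(gaps))
```

A negative gap for one group means the tool is scoring that group lower than human graders do, which is the quiet pattern the Equity Drift risk describes.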

There is also a philosophical question worth naming directly: what is school for? If the answer is “to produce measurable learning outcomes across a standardized curriculum,” then AI is extraordinarily well-suited to accelerate that process. If the answer includes “to help students develop identity, agency, curiosity, and the capacity to navigate ambiguity,” then the question of what role AI should play becomes considerably more complicated. The tools don’t answer that question. They wait for you to answer it first, and then they optimize for whatever you put in the objective function.

What Teachers Can Do Now: The Design-First Approach

Design-first doesn’t mean design-everything-before-you-start. It means developing clarity about what you’re trying to accomplish before you evaluate whether a tool helps you accomplish it. Here are seven concrete actions any teacher can take to move from passive adopter to intentional designer of their AI classroom stack.

Audit what’s already running. Before adding anything new, make a list of every AI-powered tool currently in use in your classroom — including tools embedded in platforms you use for other purposes. Many LMS systems and productivity apps now include AI features that are on by default. Knowing what’s in your stack is the first act of design.

Name your learning goals before you name your tools. Write down three to five specific outcomes you want students to achieve this semester. Then evaluate each tool in your stack against those outcomes. Tools that don’t connect to those outcomes are noise, not signal — and noise has a cost in cognitive load, data exposure, and instructional time.

Make the AI’s reasoning visible. When an adaptive platform recommends a different assignment for a student, show the student why. When an automated feedback tool flags an essay, review the flagged passage together. Demystifying AI recommendations builds student AI literacy and keeps you in the interpretive loop — which is exactly where a teacher belongs.

Build in deliberate override moments. Schedule time in your planning cadence to review AI-generated recommendations before acting on them. This doesn’t have to be elaborate — a ten-minute check at the start of each week where you compare the platform’s suggested groupings with your own read of the room is enough to maintain your professional authority over the instructional decisions in your classroom.

Teach AI literacy as content. Students who understand how recommendation algorithms work, what training data is, and what it means for an AI system to “make a decision” are better equipped to be agents of their own learning. This doesn’t require a separate unit — it can be woven into existing curricula in language arts, social studies, math, and science. The students in your room today will spend their careers working alongside AI systems. Give them the conceptual tools to work with those systems, not just inside them.

Establish a personal data hygiene protocol. Know which tools collect student data, what data they collect, how long it is retained, and whether it is shared with third parties. Review the privacy policies for the top three tools you use most frequently. If the privacy policy is difficult to read, that is itself a data point worth taking seriously.

Connect with a peer design cohort. The most powerful professional development for AI classroom design isn’t a workshop — it’s a small group of colleagues who meet regularly to share what’s working, what isn’t, and what they’re noticing. That’s a design practice, and it’s available to any teacher willing to schedule the time.
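The first two actions, auditing the stack and checking it against learning goals, fit in a spreadsheet, but the logic is easy to see as a short Python sketch. The tool names and goals below are invented for illustration, and `unaligned_tools` is a hypothetical helper, not part of any platform.

```python
def unaligned_tools(tool_goals, goals):
    """Return tools that support none of the stated learning goals."""
    goal_set = set(goals)
    return sorted(
        tool for tool, supported in tool_goals.items()
        if not goal_set & set(supported)
    )


# Semester goals written down before looking at any tool.
goals = ["argumentative writing", "fraction fluency", "source evaluation"]

# What each tool in the classroom is actually used for.
tool_goals = {
    "Reading platform": ["source evaluation"],
    "Math practice app": ["fraction fluency"],
    "Slide generator": [],
    "Chatbot trial": ["homework reminders"],
}

print(unaligned_tools(tool_goals, goals))
```

Tools that appear in the output are not necessarily bad, but they are candidates for the "noise, not signal" conversation.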

What Leaders Should Be Considering: Building for the Long Stack

For school and district leaders, the design challenge operates at a different scale — but the core logic is the same. You are assembling a system. The question is whether you’re assembling it with intention or by accumulation.

The three-pillar framework — Assist, don’t replace; Suggest, don’t decide; Illuminate, don’t obscure — functions as a procurement filter, an implementation standard, and an ongoing accountability mechanism. It’s simple enough to communicate to a school board. It’s specific enough to apply to a vendor contract. And it’s honest about what we’re actually asking AI to do in classrooms.

The Implementation Roadmap

For administrators and district leaders, moving from aspiration to implementation requires a phased approach. The following roadmap is informed by the RAND Corporation’s analysis of successful technology integration in K–12 settings and adapted for the specific characteristics of AI classroom tools (Pane et al., 2015).

Phase 1 — Inventory and Alignment (Months 1–3): Conduct a full audit of every AI-powered tool currently licensed or in use across the district. Map each tool against the five layers of the AI classroom stack established in Part I of this series. Identify gaps (layers with no tooling), redundancies (multiple tools serving the same function), and conflicts (tools that may be drawing on the same student data in incompatible ways). Cross-reference the inventory against student data privacy policies to identify compliance exposures.

Phase 2 — Framework Development (Months 2–4): Develop a district-level AI governance framework that articulates the principles, decision rights, and accountability structures for AI use in classrooms. The framework does not need to be comprehensive on day one — a five-page document that clearly states what AI tools are permitted to do, what they are not permitted to do, and who is responsible for monitoring compliance is worth more than a 50-page policy that no one reads. Involve teachers, students, and families in the development process.

Phase 3 — Pilot and Evidence Building (Months 3–9): Select two to three tools that score well against the Assist/Suggest/Illuminate framework and pilot them in defined classrooms with defined metrics. Collect both quantitative outcomes data (learning measures, time-on-task, grading efficiency) and qualitative practitioner data (teacher perception surveys, student feedback, equity observations). Use the pilot data to refine your framework and inform broader rollout decisions.

Phase 4 — Scaling with Guardrails (Month 9 onward): Expand adoption of validated tools with explicit implementation supports — professional development, coaching, and peer learning structures that build teacher capacity rather than just vendor product familiarity. Establish an annual AI stack review cycle that revisits the inventory, updates the governance framework, and evaluates whether the tools in use are still serving the educational goals they were selected to serve.
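The Phase 1 inventory lends itself to a simple data check. The Python sketch below groups tools by the five layers named in Part I and reports gaps and redundancies. The layer labels are shortened from the series description, and the tool names and the `audit_inventory` function are hypothetical.

```python
from collections import defaultdict

LAYERS = [
    "LMS",
    "adaptive learning",
    "automated grading",
    "learning analytics",
    "teacher and admin AI",
]


def audit_inventory(inventory):
    """Group tools by stack layer and report gaps and redundancies.

    inventory maps a tool name to the layer it serves.
    """
    by_layer = defaultdict(list)
    for tool, layer in inventory.items():
        if layer not in LAYERS:
            raise ValueError(f"Unknown layer for {tool}: {layer}")
        by_layer[layer].append(tool)
    # Gaps: layers with no tooling at all.
    gaps = [layer for layer in LAYERS if layer not in by_layer]
    # Redundancies: layers served by more than one tool.
    redundancies = {
        layer: sorted(tools)
        for layer, tools in by_layer.items()
        if len(tools) > 1
    }
    return gaps, redundancies


# A made-up district inventory.
inventory = {
    "District LMS": "LMS",
    "Grader One": "automated grading",
    "Grader Two": "automated grading",
    "Math Tutor": "adaptive learning",
}

gaps, redundancies = audit_inventory(inventory)
print(gaps)
print(redundancies)
```

Conflicts over shared student data and compliance exposures, the other two outputs of Phase 1, need contract and privacy-policy review that a script like this cannot do.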

Common Mistakes to Avoid

In the research literature and in practitioner accounts of AI implementation in education, several failure patterns appear with enough regularity to be worth naming directly.

Mistake one: procuring for efficiency before procuring for learning. The AI tools that are easiest to justify to a school board are the ones that save time: automated grading, scheduling assistants, administrative chatbots. Those are legitimate gains. But if efficiency is the primary procurement criterion, you end up with a stack that is very good at doing existing things faster, without interrogating whether those existing things were worth doing in the first place.

Mistake two: treating professional development as product training. When a district’s “AI PD” consists primarily of vendor-led sessions on how to use a specific platform, teachers develop competency with that tool but not with AI-in-education as a practice. When the platform changes — and it will change — they’re back to square one. Professional development for AI-ready educators needs to build conceptual fluency: how these systems work, what their limitations are, and how to maintain professional authority in a context where the systems are designed to be persuasive.

Mistake three: leaving families out of the design process. Student data governance is a community concern, not just a compliance concern. The families of the students in your building have a legitimate stake in how their children’s learning data is collected, used, and protected. Districts that treat family engagement as a communication step at the end of an implementation process — rather than a design input at the beginning — consistently face more resistance, less trust, and more political friction than those that build community voice into the framework from the outset (Selwyn, 2022).

A Forward-Looking Close: Are We Paying Attention?

In Part I, this series opened with a simple observation: there is a second teacher in your classroom, and it doesn’t need sleep. By now, we know that second teacher does far more than stay awake. It makes recommendations. It keeps records. It shapes sequences. It works inside systems its operators don’t fully understand, serving goals its developers partially defined and the market heavily influenced. It is, by almost any measure, the most consequential instructional tool to enter the classroom since the textbook, and we are, in many cases, still treating it like a productivity app.

The technology is going to keep improving. The platforms are going to keep integrating. The data is going to keep accumulating. Those are not possibilities — they are trajectories that are already well underway. The question that remains genuinely open is the design question: will the classrooms of 2030 reflect the values and goals of educators and communities, or the optimization functions of the vendors who filled the gap while we were still deciding?

The answer is still being written. Which means the educators reading this, the administrators scrolling through this on their lunch break, the homeschool parent who found this post through a search they almost didn’t bother making — you’re not observers of a story that’s already over. You’re participants in one that’s still in the first act.

Design it on purpose. Audit it regularly. Keep asking who it’s for. Teach your students to ask the same questions. And never let a tool — however impressive, however well-marketed, however enthusiastically endorsed by the vendor’s customer success team — answer the question that only you can answer: what is this classroom actually for?

That question has always been the teacher’s. AI didn’t change that. It just made it more urgent.

“The future classroom is already here. The only variable is whether we designed it — or whether we inherited it by default.”

JR DeLaney, AI Innovations Unleashed, 2026

References

- Carnegie Learning. (2025). MATHia platform: Cognitive model overview. Carnegie Learning. https://www.carnegielearning.com

- EdWeek Research Center. (2025, January). More teachers are using AI in their classrooms. Education Week. https://www.edweek.org

- Grand View Research. (2024). AI in education market size, share & trends analysis report, 2025–2030. Grand View Research. https://www.grandviewresearch.com

- IMS Global Learning Consortium. (2024). Learning Tools Interoperability (LTI) standards overview. IMS Global. https://www.imsglobal.org

- International Society for Technology in Education. (2024). ISTE AI in education framework. ISTE. https://www.iste.org

- Kaufman, J. H., Woo, A., Eagan, J., Lee, S., & Kassan, E. B. (2025). Uneven adoption of artificial intelligence tools among U.S. teachers and principals in the 2023–2024 school year. RAND Corporation. https://doi.org/10.7249/RRA134-25

- Krutka, D. G., Smits, R. M., & Willhelm, T. A. (2021). Don’t be evil: Should we use Google in schools? TechTrends, 65(4), 421–431. https://doi.org/10.1007/s11528-021-00599-6

- Madnani, N., Loukina, A., LaFlair, A., Burstein, J., & Kochmar, E. (2017). Building better open-source tools to support fairness in automated scoring. Proceedings of the First ACL Workshop on Ethics in Natural Language Processing, 41–52.

- Mollick, E., & Mollick, L. (2023). Assigning AI: Seven approaches for students, with prompts. SSRN Working Paper. https://doi.org/10.2139/ssrn.4475995

- National Home Education Research Institute. (2026). How many homeschool students are there in the United States during the 2024–2025 school year? NHERI. https://nheri.org

- O’Neil, C. (2016). Weapons of math destruction: How big data increases inequality and threatens democracy. Crown.

- Pane, J. F., Steiner, E. D., Baird, M. D., & Hamilton, L. S. (2015). Continued progress: Promising evidence on personalized learning. RAND Corporation. https://www.rand.org

- RAND Corporation. (2025, September). AI use in schools is quickly increasing but guidance lags behind. RAND Corporation. https://www.rand.org/pubs/research_reports/RRA4180-1.html

- Selwyn, N. (2019). Should robots replace teachers? AI and the future of education. Polity Press.

- Selwyn, N. (2022). Education and technology: Key issues and debates (3rd ed.). Bloomsbury Academic.

- Wexler, N. (2019). The knowledge gap: The hidden cause of America’s broken education system — and how to fix it. Avery.

- Zhao, Y. (2024). Artificial intelligence and education: End the grammar of schooling. ECNU Review of Education, 7(3). https://doi.org/10.1177/20965311241265124

Additional Reading

- Dede, C., & Richards, J. (Eds.). (2020). The 60-year curriculum: New models for lifelong learning in the digital economy. Routledge.

- Holmes, W., Bialik, M., & Fadel, C. (2019). Artificial intelligence in education: Promises and implications for teaching and learning. Center for Curriculum Redesign.

- Pedro, F., Subosa, M., Rivas, A., & Valverde, P. (2019). Artificial intelligence in education: Challenges and opportunities for sustainable development. UNESCO.


- U.S. Department of Education, Office of Educational Technology. (2023). Artificial intelligence and the future of teaching and learning: Insights and recommendations. U.S. Department of Education. https://www.ed.gov
