The AI Classroom Stack is already running in your school.

Here’s a plain-language map of its five layers — and what they’re doing together.

Mapping the AI Classroom Stack

You probably know at least one of the tools in it. You almost certainly don’t know all of them — or what they’re doing together. This is the map you didn’t get at orientation. And it’s long overdue.

The Current Narrative: What Everyone’s Talking About (and What They’re Missing)

Open any teachers’ social media group on a Tuesday morning and you’ll find some version of the same conversation. Someone is furious about AI-generated essays. Someone else has forwarded an alarming headline about AI replacing teachers. A third person wants to know whether the adaptive learning app their district just adopted is “actually AI” or just “fancy flashcards.” And somewhere in the comments, an administrator has posted a reminder about the district’s AI acceptable use policy — which, if you’ve read it, you know is mostly a list of things students shouldn’t do with ChatGPT.

The conversation about AI in education has a focus problem. Not because the individual concerns aren’t legitimate — they are — but because they’re aimed at the visible surface of a much deeper system. The public discourse, shaped by media coverage that gravitates toward drama and novelty, has given educators, parents, and homeschool families a set of talking points about individual AI tools while largely ignoring the architecture those tools collectively constitute.

In 2025 and into 2026, the dominant storylines in education media coverage of AI have been three: the academic integrity crisis triggered by generative AI tools, the promise (and fear) of AI tutors, and the occasional feel-good story about AI enabling learning for students with disabilities. Each of these stories is real. None of them is the whole picture.

Here’s what most teachers and administrators are hearing: AI in education means a chatbot students might use to cheat, an AI tutor that raises efficiency questions, and a policy decision that needs to be made by September. What they are largely not hearing — and what this series is designed to address — is that the AI transformation of education isn’t primarily about any individual tool. It’s about a system that’s already running. And most of the people operating inside it have never seen a map.

Homeschool communities, which have historically been early and enthusiastic adopters of educational technology, are navigating this terrain with a particular kind of risk. Because homeschool families tend to select tools individually — with real intentionality and research — there’s an intuitive sense that the technology choices are understood and controlled. But intentionality at the individual tool level doesn’t automatically translate into understanding at the system level. A homeschool parent using an adaptive math platform, a reading comprehension app, an AI writing coach, and a portfolio tracking system has built an AI classroom stack — whether they’ve ever heard that term or not.

The most common misconception isn’t that AI is dangerous or that AI is magical. The most common misconception is that AI in education is primarily a future question, or a question about individual tools. It isn’t. The system is already here. And understanding it begins with understanding what it actually is.

What’s Actually Happening: Five Layers and How They Connect

Let’s start with a definition — one that will serve as the foundation for everything that follows in this series.

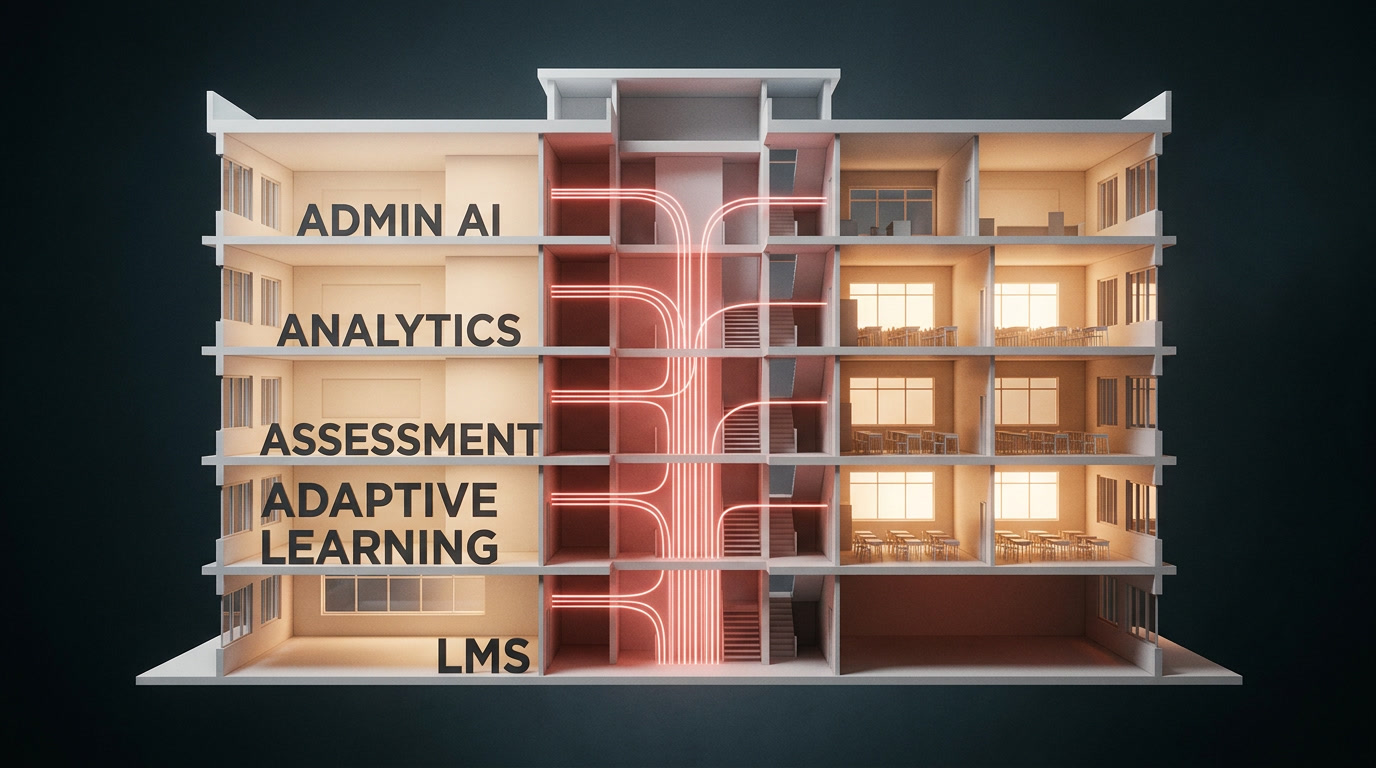

The AI Classroom Stack is the interconnected system of artificial intelligence-powered tools that collectively constitute the operational infrastructure of modern learning environments. It is not one tool. It is not a category of tools. It is a layered architecture — five distinct strata that interact with each other, generate data about learners, and increasingly make recommendations and decisions about learning pathways, content, intervention, and outcomes.

The “stack” framing — borrowed deliberately from software engineering’s concept of a technology stack, the collection of interconnected systems that power an application — is precise and useful. Just as a web application depends on layers of infrastructure that most users never see (databases, servers, frameworks, APIs), the modern learning environment runs on layers of AI infrastructure that most students, teachers, and families never encounter directly. And just as a developer who only knows the user interface of an application has an incomplete picture of how it actually works, an educator who only knows the student-facing tool has an incomplete picture of the environment they’re operating inside.

Here are the five layers, in plain language.

Layer 1 — The Learning Management System (LMS)

The LMS is the foundation layer. Canvas, Schoology, Google Classroom, Brightspace, and PowerSchool Learning organize the logistics of learning: assignments, grades, communication, scheduling, and content distribution. For most teachers and students, the LMS is the primary daily interface — the thing you log into every morning, the thing your gradebook lives in.

For most of the history of educational technology, the LMS was essentially passive infrastructure: a digital filing cabinet with a gradebook attached. That is no longer an adequate description. Modern LMS platforms have integrated artificial intelligence directly into their core architecture. Canvas’s Intelligent Insights feature uses machine learning to surface early warning indicators of student disengagement. Schoology integrates adaptive assessment pathways that branch based on student performance. Google Classroom connects to an expanding ecosystem of AI-powered third-party tools through its APIs. Brightspace offers predictive analytics dashboards that model student risk at the course level. The LMS has become the connective tissue of the stack — the layer that everything else attaches to, and through which data flows from every other layer.

Layer 2 — AI Tutoring and Adaptive Learning Platforms

The second layer is the most visible to students. Khan Academy’s Khanmigo, Carnegie Learning’s MATHia, DreamBox Learning, IXL, Lexia Reading, and Duolingo for Schools are representative examples of a category that has grown dramatically in both capability and adoption. These platforms use machine learning to build individual models of each learner and to personalize the learning sequence in real time.

The distinction between this generation of adaptive learning and the simpler branching logic of earlier educational software is meaningful. A decision-tree approach — if the student gets three correct, increase difficulty — is transparent and predictable. Modern adaptive platforms build probabilistic models of knowledge state: statistical representations of what a student likely knows, doesn’t know, is in the process of learning, and is ready to learn next. Those models are updated with each interaction, and they drive hundreds of micro-decisions per session about which content to present, how to present it, and when to adjust the pathway.
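To make the contrast concrete, here is a minimal sketch in Python. The first function is the transparent branching rule described above. The second part uses Bayesian Knowledge Tracing, a classic technique from the research literature, as a stand-in for a "probabilistic model of knowledge state." This is an illustration only: the parameter values are invented, and nothing here is meant to describe how any particular commercial platform works internally.

```python
from dataclasses import dataclass


def rule_based_next_level(level: int, recent_results: list[bool]) -> int:
    """Branching logic: three correct answers in a row raises the difficulty."""
    if len(recent_results) >= 3 and all(recent_results[-3:]):
        return level + 1
    return level


@dataclass
class SkillModel:
    """Bayesian Knowledge Tracing for one skill (all values are illustrative)."""
    p_known: float = 0.2   # current estimate that the student has mastered the skill
    p_learn: float = 0.15  # chance of learning the skill on a practice opportunity
    p_slip: float = 0.1    # chance of a wrong answer despite mastery
    p_guess: float = 0.25  # chance of a right answer without mastery

    def update(self, correct: bool) -> float:
        """Revise the mastery estimate after one observed answer."""
        if correct:
            evidence = self.p_known * (1 - self.p_slip)
            total = evidence + (1 - self.p_known) * self.p_guess
        else:
            evidence = self.p_known * self.p_slip
            total = evidence + (1 - self.p_known) * (1 - self.p_guess)
        posterior = evidence / total
        # Allow for the chance that the student learned from this attempt.
        self.p_known = posterior + (1 - posterior) * self.p_learn
        return self.p_known


if __name__ == "__main__":
    answers = [True, False, True, True, True]
    model = SkillModel()
    for i, answer in enumerate(answers, start=1):
        estimate = model.update(answer)
        print(f"after answer {i}: P(mastered) = {estimate:.2f}")
    print("rule-based level:", rule_based_next_level(1, answers))
```

The rule can be read and predicted by anyone. The model's estimate shifts with every answer and depends on parameters a teacher typically never sees, which is the practical difference the paragraph above describes.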

As Rose Luckin, Wayne Holmes, and colleagues argued in their seminal report for Pearson, AI in education reaches its full potential not as a content delivery mechanism but as a system capable of modeling the learner, the subject domain, and the pedagogical context simultaneously — adapting not just to what a student gets right or wrong, but to how and why (Luckin et al., 2016). That potential is increasingly operational in the platforms currently deployed in classrooms.

Layer 3 — Automated Grading and Assessment Tools

Layer three addresses one of teaching’s most time-intensive responsibilities. Gradescope (now part of Turnitin) supports evaluation of handwritten work, code, mathematical proofs, and short written responses. Writable and EssayGrader use natural language processing to assess the structural and mechanical quality of student writing. Formative provides real-time AI feedback as students work through problems. Turnitin’s AI writing feedback tools evaluate argumentation and evidence integration alongside their originality detection function.

For objective assessments with a single correct answer, automated scoring is generally treated as equivalent to human scoring in accuracy. For written work, the capability gap is narrowing quickly. The practical implications for teachers are significant: a system that provides a student with structured feedback on their essay thirty seconds after submission changes the rhythm of revision in ways that have genuine instructional potential. The question, and it’s worth asking explicitly, is what happens to the teacher’s professional relationship with student work when reviewing AI-generated assessments becomes the primary mode of engagement with student writing. That is a different professional role, with different intellectual demands, and it has arrived largely without discussion.

Layer 4 — Learning Analytics and Dashboards

The fourth layer is the most powerful and the least visible. Learning analytics platforms — Panorama Education, BrightBytes, and PowerSchool Analytics among the most widely deployed — aggregate data from across the other layers: time on task, assessment performance, engagement signals, login frequency, assignment completion rates, behavioral patterns within platforms. They surface that aggregated data to teachers, counselors, and administrators through real-time dashboards designed to provide, at a glance, a systemic view of student performance and risk.

What makes this layer consequential beyond its descriptive function is a shift in analytical mode that has occurred quietly over the last three years. Early learning analytics platforms were primarily descriptive: here is what happened. Current platforms are increasingly predictive: here is what we model is likely to happen. And leading platforms have begun operating in prescriptive mode: here is what we recommend you do about it. This three-stage progression — from describing to predicting to recommending — represents a fundamental shift in the role of data-driven technology in educational decision-making. The system is no longer just reporting. It is advising.
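The three modes can be sketched in a few lines of Python. Everything below is a simplifying assumption made for illustration: the weekly completion rates are invented, the "prediction" is a simple trend extension, and the 0.80 threshold and the wording of the recommendation are hypothetical. Real platforms use far more signals and far more complex models.

```python
from statistics import mean

# Hypothetical weekly assignment completion rates for one student (0.0 to 1.0).
weekly_completion = [0.92, 0.90, 0.85, 0.80]


def describe(rates: list[float]) -> float:
    """Descriptive: report what happened (average completion so far)."""
    return mean(rates)


def predict_next(rates: list[float]) -> float:
    """Predictive: extend the average week-to-week change one week forward."""
    changes = [later - earlier for earlier, later in zip(rates, rates[1:])]
    projected = rates[-1] + mean(changes)
    return min(1.0, max(0.0, projected))  # keep the projection inside 0..1


def recommend(projected: float, threshold: float = 0.80) -> str:
    """Prescriptive: turn the projection into a suggested action."""
    if projected < threshold:
        return "suggest a teacher check-in"
    return "no action suggested"


if __name__ == "__main__":
    projected = predict_next(weekly_completion)
    print(f"descriptive:  average completion {describe(weekly_completion):.2f}")
    print(f"predictive:   projected next week {projected:.2f}")
    print(f"prescriptive: {recommend(projected)}")
```

Each step adds a judgment the previous one did not contain: the projection assumes the trend continues, and the recommendation assumes a threshold someone chose. Those embedded choices are what "the system is advising" means in practice.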

Layer 5 — AI-Powered Teacher and Administrative Tools

The fifth layer is the newest and fastest-growing, and it operates at a level that most discussions of “AI in education” overlook entirely: the tools designed not for students but for the educators and administrators who design the learning environment. Platforms like MagicSchool.ai, SchoolAI, and Eduaide.Ai enable teachers to generate differentiated lesson plans, write IEP accommodations, develop rubrics, create assessment items, and produce parent communications in a fraction of the time those tasks previously required. Teachers who use these platforms regularly report saving between two and five hours of preparation time per week — time that can be redirected toward direct student interaction.

On the administrative side, AI tools are in active use for schedule optimization, predictive enrollment modeling, early dropout identification, budget scenario planning, and predictive staffing. This layer shapes the learning environment with the same directness as any student-facing tool — because it shapes the decisions that teachers and administrators make about that environment. The AI that helps a teacher design an intervention protocol, or helps a counselor draft a support plan, is as much a part of the stack as the adaptive platform a student interacts with at 8:45 in the morning.

Where AI Is Already Being Used: A Tuesday Morning in the Stack

Let’s translate the architecture into lived experience. What does the AI Classroom Stack look like on a Tuesday morning — not in a technology showcase, but in an ordinary school?

A seventh-grader at a mid-sized suburban public school logs into Canvas at 7:48 a.m. Canvas is already running. Over the past two weeks, it has logged a 12% decline in her assignment completion rate and a drop in average time-on-task across her course sections. That data has populated a flag in her advisory teacher’s dashboard: “Academic Risk — Engagement Decline.” The teacher hasn’t seen it yet — they’re managing arrival procedures — but it’s there.

The student opens MATHia for her morning adaptive math session. The platform serves a problem set calibrated to her current knowledge model — slightly more challenging than yesterday’s, because yesterday’s session data indicated she was operating below her optimal challenge level. Over the next 24 minutes, MATHia logs nearly a thousand discrete data points: problem completion sequences, types of errors, time-between-attempts, hint system usage, keystroke timing. Those data points update her learner model and feed back into the LMS analytics layer.

In English, the teacher assigns a short argumentative paragraph through Writable. Students submit. Within 90 seconds, Writable’s AI has assessed every submission: claim clarity, evidence integration, mechanical accuracy, sentence variety. The teacher opens a summary dashboard showing class-wide performance patterns and flagged students needing direct support. She hasn’t read a single submission yet — she’s reviewing an AI-generated synthesis of all of them.

Meanwhile, 600 miles away, a homeschool student in Colorado opens DreamBox for her daily math session. Her mother set a 45-minute limit in the parent portal. DreamBox serves a sequence based on the learner model it has been building for 14 weeks — a model that, unknown to the family, hasn’t been recalibrated since a two-week illness disrupted performance data. The platform is operating on a portrait of the student that no longer fully reflects her. The session proceeds. The model updates incrementally. No alert is generated.

These aren’t speculative scenarios. They’re representative of the operational reality in AI-integrated learning environments right now. The stack is running every morning. The question is how many of the people inside it understand what’s running around them.

“We keep talking about individual tools as if they exist in isolation. But tools don’t stay individual. They integrate, they share data, they build models of the learner. The question isn’t whether you’re using AI in your classroom — it’s whether you understand the system you’re already inside.”

JR DeLaney, AI Innovations Unleashed

Sal Khan, whose work at Khan Academy has made him one of the most influential voices in educational technology, argued in his widely watched 2023 TED talk that AI could give every student access to what he described as the kind of Socratic, personalized tutoring that was historically available only to the privileged few: a responsive, endlessly patient intellectual companion that adapts to each learner’s needs (Khan, 2023). Khan’s vision is grounded in real evidence from Khan Academy’s AI tools. But his framing also illustrates the gap this series keeps returning to: the promise of individual AI tools in education and the complexity of the full system those tools exist within are not the same conversation. Both conversations are necessary.

Risks and Tradeoffs: What the Stack Gets Wrong

Engaging honestly with the risks of the AI Classroom Stack doesn’t require alarmism. The risks are real, they’re documented, and they’re manageable — if they’re understood.

Student Data Privacy

The AI Classroom Stack generates an unprecedented volume of behavioral, academic, and predictive data about minors. Federal frameworks — FERPA for student education records and COPPA for children under 13 — provide a legal baseline, but both were written before AI-generated behavioral analytics existed as a category of student data. The gap between what current law addresses and what AI learning platforms can collect, model, and infer about students is substantial and growing. A 2023 report from the Center for Democracy & Technology found that a majority of school-deployed ed-tech tools collect student data beyond what is necessary for their stated educational function, and that the use of that data by vendors for product improvement, research, and in some cases third-party sharing is common (Center for Democracy & Technology, 2023).

Algorithmic Bias

Adaptive learning systems are trained on historical performance data. If that data reflects existing inequities in educational outcomes — and decades of research confirm that it does — the systems built on it can perpetuate and amplify those inequities. A platform trained primarily on performance data from well-resourced schools may generate learner models, difficulty calibrations, and content recommendations that perform less accurately for students whose learning trajectories don’t match the training distribution. This is not a theoretical concern. It is a documented pattern across AI systems in domains ranging from hiring to healthcare, and there is no structural reason to assume educational AI is immune (Holstein et al., 2019).
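One simple way to look for this pattern is to compare how often a model's predictions match reality for different groups of students. The Python sketch below does only that. The records are made up purely to show the calculation, the group labels are hypothetical, and a real audit would need far more data and more careful statistics.

```python
from collections import defaultdict

# Made-up records for illustration only:
# (school group, model predicted mastery, student actually showed mastery)
records = [
    ("well_resourced", True, True),
    ("well_resourced", False, False),
    ("well_resourced", True, True),
    ("well_resourced", True, False),
    ("under_resourced", False, True),
    ("under_resourced", True, True),
    ("under_resourced", False, True),
    ("under_resourced", False, False),
]


def accuracy_by_group(rows):
    """Share of predictions that matched the observed outcome, per group."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in rows:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {group: correct[group] / total[group] for group in total}


if __name__ == "__main__":
    for group, accuracy in accuracy_by_group(records).items():
        print(f"{group}: {accuracy:.0%} of predictions matched")
```

A gap between groups does not by itself prove bias, but it is the kind of question a district can reasonably put to a vendor.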

Stack Opacity

Most educators, students, and families have no visibility into how the AI systems affecting their learning environments make decisions. When an adaptive platform adjusts a student’s pathway, no explanation is provided. When an analytics dashboard flags a student as at-risk, the algorithmic criteria for that flag are typically proprietary. When an AI grading system assigns a score to a written response, the model’s logic is not available for review or appeal. This opacity is often a deliberate product design choice or a consequence of intellectual property protections — and it creates a fundamental accountability gap. If no one in the learning environment can examine how the system reached a conclusion, no one can meaningfully contest it.

The Philosophical Question: Who Is the Learner When AI Mediates Every Interaction?

Here is the question that hovers over this entire series and that doesn’t have an algorithmic answer: If a student’s learning pathway is designed by an AI, content is selected by an AI, feedback is generated by an AI, performance is modeled by an AI, and all of this is summarized for a teacher through an AI-generated dashboard — at what point does the student’s learning experience belong to the student? This is not an argument against AI-mediated learning. It is a prompt for thinking carefully and explicitly about what we mean by learning, what role human relationship and judgment play in it, and what we are implicitly claiming when we say a student has learned something. These are not questions that can be resolved by the stack. They can only be addressed by the people responsible for operating it.

What Teachers Can Do Now

Understanding the AI Classroom Stack doesn’t require a computer science background. It requires intentionality. Here are five concrete actions any teacher can take — beginning this week.

01. Conduct a tool audit. Sit down and list every digital tool your students interact with during a typical school week, including tools you didn’t choose, tools that came with your LMS, and tools students use independently as homework supplements. Most teachers who complete this exercise discover platforms they had forgotten were running. That list is your stack. It is the foundation of every other decision on this list.

02. Ask the data question for each tool. For every platform on your list, find out: What data does this tool collect about my students? How long is it retained? Who has access to it? What does the vendor do with it? Your district’s ed-tech coordinator or legal team may have this information in vendor contracts, but you may need to ask directly. The fact that the question is hard to answer is itself important information.

03. Integrate AI literacy into what you already teach. You don’t need a dedicated AI curriculum unit to help students develop critical awareness of the systems they’re inside. Every subject area has entry points. A math class can explore how recommendation algorithms work. An English class can analyze how AI writing tools evaluate quality. A social studies class can examine the governance questions around student data and algorithmic decision-making.

04. Build a classroom AI norm document with students. Develop shared language (with your students, not just for them) about how AI tools are and aren’t used in your classroom. Frame it as a reflective practice exercise, not primarily as a rule list. Students who understand why they’re making choices about AI engagement are better prepared for a professional world where those choices will be constant and consequential.

05. Initiate the systems conversation at your school. The AI Classroom Stack is a school-level phenomenon, not just a classroom-level one. Conversations with your department, your instructional technology team, and your administration have higher leverage than any individual tool decision you make alone. You don’t need to have all the answers to start the conversation. You need to be willing to ask the questions.
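For teachers who like to keep the audit somewhere more durable than a sticky note, the first two steps can be captured in a small structure. The Python sketch below is one possible shape, not a standard: the `ToolRecord` class and its fields are hypothetical, the example tools are simply names mentioned earlier in this post, and the answer text is a placeholder, not a statement about any vendor's actual practices. A spreadsheet with the same columns would work just as well.

```python
from dataclasses import dataclass, field

# The four data questions from step 02, used as a checklist.
DATA_QUESTIONS = (
    "What data does this tool collect about my students?",
    "How long is it retained?",
    "Who has access to it?",
    "What does the vendor do with it?",
)


@dataclass
class ToolRecord:
    """One row in a classroom tool audit (hypothetical structure)."""
    name: str
    layer: str               # which of the five stack layers the tool belongs to
    chosen_by_teacher: bool  # False for tools that arrived with the LMS or district
    answers: dict[str, str] = field(default_factory=dict)

    def open_questions(self) -> list[str]:
        """Return the data questions that still have no recorded answer."""
        return [q for q in DATA_QUESTIONS if not self.answers.get(q)]


# Example entries; the answer text is a placeholder, not a fact about any vendor.
audit = [
    ToolRecord("Canvas", "LMS", chosen_by_teacher=False),
    ToolRecord(
        "Writable",
        "Automated grading",
        chosen_by_teacher=True,
        answers={"How long is it retained?": "placeholder: check the vendor contract"},
    ),
]

if __name__ == "__main__":
    for tool in audit:
        print(f"{tool.name}: {len(tool.open_questions())} unanswered data questions")
```

The useful output is the list of questions that remain open for each tool, which gives the conversation with your ed-tech coordinator a concrete starting point.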

What Leaders Should Be Considering

For administrators and district leaders, the AI Classroom Stack presents both a governance responsibility and a strategic opportunity. The following represent areas where leadership attention is most urgently needed.

Procurement as policy: Every tool in the stack entered through a procurement decision. Building AI transparency requirements — what data is collected, for what purpose, with what algorithmic logic — into every ed-tech evaluation process is one of the highest-leverage interventions available to district leadership.

Data governance frameworks: The data the stack generates about students doesn’t disappear when a vendor contract ends. A district data governance policy that inventories what data exists, where it resides, who can access it, and what it can be used for is a fiduciary responsibility, not a technical nicety.

Systems-level professional development: Most teacher training around AI focuses on individual tool use. What’s needed — and what’s largely absent — is professional development that helps teachers understand the stack as a system: how their tools connect to each other, what data flows between them, and what collective decisions they’re enabling.

Family communication: Parents and guardians have a right to understand the AI systems modeling, assessing, and making recommendations about their children. Districts that are proactive and transparent about their AI stack will build the trust necessary for the governance conversations ahead.

Ethical frameworks: The shift from descriptive to predictive to prescriptive AI — from reporting what happened, to predicting what will happen, to recommending what should be done — requires explicit ethical frameworks about what kinds of AI recommendations are appropriate inputs into educational decisions, and what human authority must be retained.

A Forward-Looking Close: The Map Is Just the Beginning

The AI Classroom Stack as it exists in 2026 is not a finished system. It’s a system in rapid development.

The integration that feels novel today — an LMS that feeds an analytics platform that informs an adaptive learning sequence — will be standard infrastructure within three years. The next phase of development is deeper interoperability: platforms sharing learner models across tools in real time, AI systems generating personalized learning pathways that span multiple subjects and learning environments simultaneously, and administrative AI making resource allocation recommendations based on predictive population-level models. The line between “the AI recommends an intervention” and “the AI assigns an intervention” is already blurring in some platforms. That blurring will accelerate.

The question that frames this entire series isn’t whether the AI Classroom Stack should exist. It already does. The question is whether the educators, administrators, and families responsible for children’s learning will engage with it as informed, intentional actors — or as passive recipients of a system whose logic they’ve never examined.

The architecture of learning — how children spend their time, what feedback they receive, what pathways they’re offered, who decides when they’re ready to advance — is among the most consequential infrastructure a society produces. When that architecture is shaped by AI systems, the people operating those systems need to understand them. Not at an engineering level. At a professional level. At a civic level.

The stack is running. This is the map. And understanding the map is where the real work begins.

This post is paired with Episode 1 of The AI Classroom Stack podcast — “The Invisible Classroom: Meet the AI Stack” — available now on Apple Podcasts, Spotify, Amazon Music, and Pocket Casts.

References

- Bransford, J. D., Brown, A. L., & Cocking, R. R. (Eds.). (2000). How people learn: Brain, mind, experience, and school. National Academy Press.

- Center for Democracy & Technology. (2023). Hidden harms: The misleading promises of ed tech surveillance. CDT. https://cdt.org/insights/hidden-harms-the-misleading-promises-of-ed-tech-surveillance/

- CoSN (Consortium for School Networking). (2025). Annual infrastructure survey: AI adoption and readiness in K–12. CoSN.

- Holstein, K., McLaren, B. M., & Aleven, V. (2019). Co-designing a real-time classroom orchestration tool to support teacher–AI complementarity. Journal of Learning Analytics, 6(2), 27–52. https://doi.org/10.18608/jla.2019.62.3

- HolonIQ. (2024). Global EdTech market outlook 2024–2027. HolonIQ Intelligence.

- ISTE. (2025). AI in education: Educator readiness and classroom adoption report. International Society for Technology in Education.

- Khan, S. (2023, March). How AI could save (not destroy) education [TED Talk]. TED Conferences. https://www.ted.com/talks/sal_khan_how_ai_could_save_not_destroy_education

- Luckin, R., Holmes, W., Griffiths, M., & Forcier, L. B. (2016). Intelligence unleashed: An argument for AI in education. Pearson Education.

- Selwyn, N. (2019). Should robots replace teachers? AI and the future of education. Polity Press.

- Williamson, B. (2017). Big data in education: The digital future of learning, policy and practice. SAGE Publications.

Additional Reading

- Reich, J. (2020). Failure to disrupt: Why technology alone can’t transform education. Harvard University Press.

- Watters, A. (2021). Teaching machines: The history of personalized learning. MIT Press.

- Ferdig, R. E., & Pytash, K. E. (Eds.). (2024). K–12 teachers navigating generative AI. AACE.

- Singer, N. (2017, May 13). How Google took over the classroom. The New York Times.

Additional Resources

- AI for Education — aiforeducation.io

- CoSN AI Guidance for K–12 Leaders — cosn.org

- Center for Democracy & Technology — Education Privacy — cdt.org

- ISTE AI in Education Resources — iste.org

- Future of Privacy Forum — Student Privacy — fpf.org
